The Death of Authorship

Who Owns What AI Creates, and Why the Law Can’t Answer

In 2011, a Celebes crested macaque named Naruto picked up a wildlife photographer’s camera in the jungles of Indonesia and took a selfie. The image was striking. Naruto stared into the lens with something resembling self-awareness, mouth open, eyes bright, the kind of accidental composition that a human photographer might spend hours trying to stage. The photo went viral. And then the lawsuits started.

PETA sued on the monkey’s behalf, arguing that Naruto should own the copyright to his own image. A federal district court said no. The Ninth Circuit Court of Appeals agreed. The reasoning was simple and felt, at the time, almost obvious: copyright law protects human creativity. Animals cannot be authors. The photo was impressive, sure. But a monkey pressing a shutter button is not an act of authorship, no matter how good the picture turns out.

The case made headlines, inspired debates, became a punchline. And then most people forgot about it.

They shouldn’t have.

Because in March 2026, the United States Supreme Court was asked to answer an almost identical question, except the photographer was a machine. And the answer, while technically the same, cracked open something the monkey selfie never could. It revealed a gap in the legal definition of creativity so wide that the entire future of authorship, ownership, and creative work in America now lives inside it.

The Machine That Tried to Be an Artist

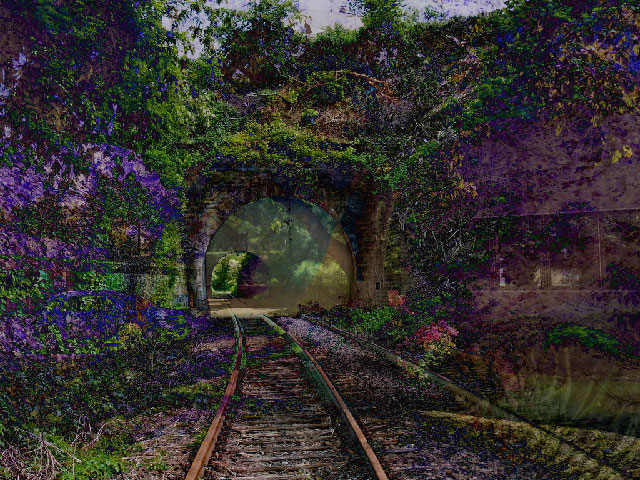

Stephen Thaler is a computer scientist. He built an artificial intelligence system he calls the Creativity Machine. In the early 2020s, the Creativity Machine produced a visual artwork titled “A Recent Entrance to Paradise.” Thaler submitted the work to the U.S. Copyright Office for registration, listing the AI as the sole author.

The Copyright Office rejected the application. Thaler appealed. The case went to federal district court, which sided with the Copyright Office. He appealed again. The D.C. Circuit Court of Appeals heard the case and, on March 18, 2025, affirmed: the Copyright Act requires human authorship as a foundational condition of copyright protection.

The court’s reasoning was thorough. It pointed to the structure of the Copyright Act of 1976 itself, a law written so thoroughly around the assumption of a human creator that its provisions reference widows, children, lifespans, and signatures. Every mechanism in the statute presumes a human being on the other end. The court called human authorship “a bedrock requirement” of copyright registration.

Thaler had one card left. He petitioned the U.S. Supreme Court, arguing that corporations have been recognized as copyright holders for over a century without the statute explicitly saying “human.” The court declined to hear the case on March 2, 2026. No opinion, no dissent, no explanation. Just a quiet refusal to engage.

In legal terms, a denial of certiorari is not the same as agreeing with the lower court. The Supreme Court simply chose not to weigh in. But the practical effect is the same. For now, the legal position of the United States is clear: AI cannot be an author. Works produced solely by artificial intelligence are not eligible for copyright protection.

Which raises the question no one in that courtroom was prepared to answer: if the machine can’t own what it creates, who can? If you’ve ever used ChatGPT to draft an email, asked an AI to generate an image for a presentation, or let a tool like Midjourney help you design something, this question is about you. You just didn’t know it yet.

The Artist Who Made Something and Couldn’t Own It

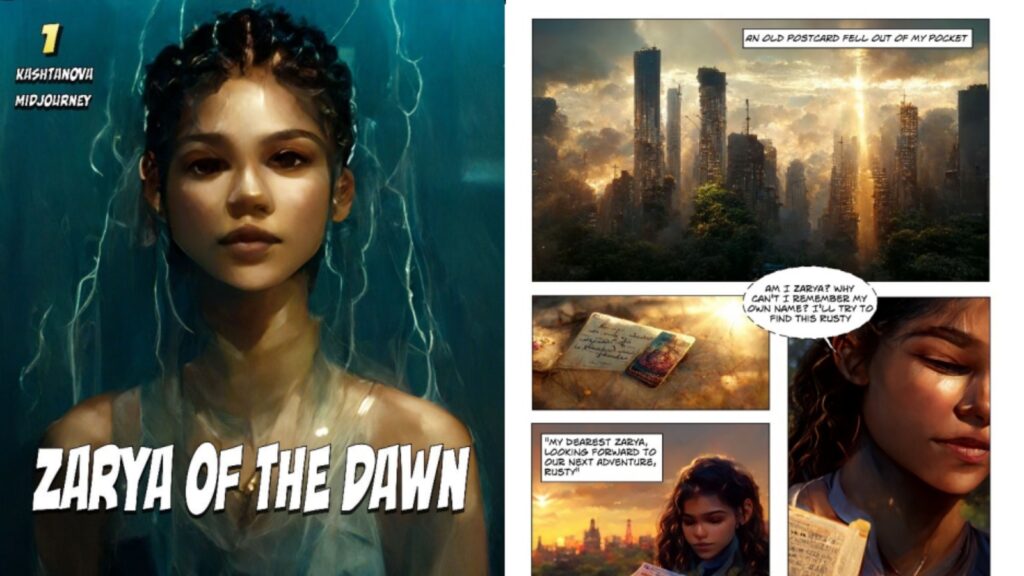

Kristina Kashtanova is not a computer scientist testing a legal theory. She is an artist. She created a graphic novel called “Zarya of the Dawn,” a science fiction story she wrote, designed, and assembled using her own text and images she generated through the AI platform Midjourney. She selected which images to use, iterated on prompts until the visuals matched her creative vision, arranged them alongside her writing, and built a cohesive narrative from the pieces. She did what creators do. She made something.

The U.S. Copyright Office initially registered the work. Then it took a closer look, and partially reversed its decision. The Office granted copyright protection for Kashtanova’s text and for the selection and arrangement of the visual and textual elements. Those were her creative decisions. But the individual AI-generated images received no copyright protection at all.

The reasoning came down to control. The Copyright Office compared Kashtanova’s relationship to Midjourney to a photographer’s relationship to a camera, and found it lacking. Kashtanova may have described what she wanted. She may have refined it through dozens of iterations. But in the Copyright Office’s view, the machine made the expressive choices. The same prompt, entered twice, can produce materially different results. She was closer to a client commissioning an artist than an artist holding a brush.

Think about what this means in practice. Not just for professional artists, but for anyone who has ever used AI to make something they cared about. A small business owner who used AI to generate a logo. A teacher who created illustrations for a lesson plan. A comic book creator who uses AI to generate character designs. None of them can copyright the AI-generated parts of what they made. Take the comic book creator: they cannot copyright the appearance of their characters. Another creator could copy the hero’s look, their costume, their face, and tell their own stories with it. The law would have nothing to say about it. The person who conceived the character, who spent hours refining prompts and selecting the version that matched what they saw in their head, owns the story they wrote around it. They do not own the image.

Kashtanova did the work. She made creative decisions at every stage. And the legal system told her that the parts of her work produced by AI belong to no one.

This is the reality that the Thaler decision created and the Zarya of the Dawn ruling made personal. AI can’t be an author. But the humans using AI to create don’t automatically become authors of what the AI produces, either.

So maybe authorship isn’t dead. It’s just being redrawn around the concept of “creative direction,” and the line between directing and prompting is thinner than most people realize.

How the Law Got Here

The reason the Copyright Office drew the line where it did goes back further than most people would expect. Copyright law has always been a negotiation between technology and authorship. Every time a new tool arrived that could produce creative work, the legal system had to decide whether the tool was an extension of the human or something else entirely.

In 1884 (yes, it goes back this far), the Supreme Court confronted this question with photography. A lithography company called Burrow-Giles argued that a portrait of Oscar Wilde taken by photographer Napoleon Sarony could not be copyrighted because a camera is a machine, and machines do not create art. The Court disagreed. Sarony had arranged Wilde’s pose, selected the costume, designed the lighting, and chosen the composition. The camera captured the image, but the human made every creative decision that mattered. The tool was an instrument. The authorship belonged to the person holding it.

That distinction held for 142 years. Brushes, typewriters, synthesizers, digital cameras, Photoshop. Every new technology got absorbed into the same framework. The tool changes, but the human directs the creative output, and authorship follows from that direction. By this logic, a monkey with a camera couldn’t be an author for the same reason a camera without a human couldn’t: no one was making the creative decisions. The framework held because the question was always the same, and the answer was always the human.

Generative AI breaks the pattern. The Copyright Office used Sarony’s camera as the benchmark when it evaluated Kashtanova’s use of Midjourney, and the comparison is what sank her claim to the images. A photographer controls framing, lighting, depth of field, exposure, subject, angle. A Midjourney user describes what they want and receives a probabilistic output they cannot fully predict or replicate. Sarony was holding the instrument. Kashtanova was describing a destination and hoping the machine found a reasonable route. The legal system sees a meaningful difference between those two acts, and that difference is why copyright law, a framework that successfully absorbed every creative technology for over a century, cannot absorb this one.

The Other Side of the Gap

If AI can’t be an author and the people using AI don’t fully own what it produces, the next question feels like it should have an easy answer. What about the humans whose creative work trained the AI in the first place? The painters, writers, photographers, and musicians whose portfolios were scraped and fed into these systems as training data? Surely they have a claim.

Under current law, they don’t.

This is the part that should bother everyone, not just artists. If you’ve ever posted a photo online, written a blog, shared a story, or published anything on the internet, your creative work may already be part of an AI training dataset. And if it is, you have no ownership claim to anything the model produces with what it learned from you.

Copyright protects the act of creation. It does not retroactively grant ownership to people whose existing creations were consumed as raw material by a machine learning model. The artist whose paintings taught an AI to paint has no legal claim to what the AI produces. The novelist whose books were ingested by a language model has no copyright interest in the model’s output. The connection between their work and the machine’s output is real, it is causal, and it is legally invisible.

So the courts have confirmed that AI-generated work has no author. And the law offers no protection to the human creators whose labor made that work possible. The output sits in a legal no-man’s-land, unowned and unprotected, while the people who built the foundations for it have no seat at the table.

The question worth asking is whether the legal system’s own logic can fix this. The answer, if you follow it through, is no.

We grant creators ownership because the promise of it incentivizes them to create. That is the foundational bargain of copyright. But a machine requires no incentive. It will generate output whether or not anyone owns the result. Granting copyright protection to the person who typed a prompt creates what legal scholar Ezieddin Elmahjub calls a “utilitarian mismatch”: massive legal protection in exchange for negligible creative contribution. We have a labor theory of ownership that says you own what your effort produces. But when you prompt an AI, your effort is directed at steering a probabilistic system, not at making the expressive choices that define the final work. The algorithm makes those choices. And we have the idea that a creative work carries something of its author’s inner world, that a painting or a novel is an extension of the person who made it. AI output emerges from statistical associations across millions of data points. The prompt is an initial condition in a complex system, not the imprint of a person’s will on the world.

Every one of these justifications assumes a human being at the center of the creative act. Remove the human, and they collapse. The output belongs to no one. But it didn’t come from nowhere. It came from the accumulated creative labor of thousands of artists who were never asked, never compensated, and never told. At least the monkey got famous.

Who Wins When No One Owns Anything

Nobody owns the output. But somebody is making money from it.

While we debate authorship and ownership, AI companies are profiting from work that exists in a legal vacuum.

OpenAI has signed licensing deals with the Financial Times, Vox Media, The Atlantic, and Reddit, the last worth a reported $70 million per year. Universal Music Group and Warner Music Group both settled lawsuits against AI music generation companies in late 2025. These agreements are not the result of a clear legal framework. They are the result of litigation risk. It is cheaper to license training data than to fight about it in court for years.

Look at the incentive structure this creates. If the future of AI training runs through licensing deals, the advantage goes to the companies that can afford to pay. Google, Microsoft, Meta, and OpenAI have the capital to license massive datasets. They also control enormous proprietary reserves of user-generated content from their own platforms. Independent creators, small publishers, and individual artists cannot compete in this market. The licensing model emerging outside the courtroom does not distribute creative power. It concentrates it.

The artists whose work trained these systems are not parties to these deals. They are, in a functional sense, the raw material. Their portfolios were scraped, ingested, and used to build systems that now compete with them for the same clients and audiences. A freelance illustrator whose style was absorbed by an image generator has no legal mechanism to share in the revenue that generator produces. A novelist whose books were used to train a language model has no stake in the subscription fees users pay to access it. The value of their creative labor has been extracted, processed, and monetized. The legal system offers them nothing in return.

We should call this what it is. It is a transfer of value from the people who create to the companies that build the machines trained on what they created. And it is happening in the open, in broad daylight, because the law has no category for it. Every time you pay a subscription to use an AI tool, some portion of what you’re paying for was built on creative work that was taken without permission and used without compensation. The product works because other people’s labor is inside it.

What’s Being Fought Over, and What’s Missing Entirely

The most consequential unsettled legal question in this space is whether using copyrighted works to train AI models constitutes infringement or fair use. And the answer, depending on which authority you ask, could go either way.

In May 2025, the U.S. Copyright Office released Part 3 of its AI report, focused specifically on training data. The Office rejected the argument that AI training is automatically protected by fair use. It noted that all major language models, including GPT, Llama, and Claude, were built in part using books obtained from pirated sources. It identified three categories of market harm: lost sales from direct substitution, market dilution from a flood of AI-generated content, and lost licensing revenue. The report stated plainly that commercial use of copyrighted works to produce content that competes with the originals “goes beyond established fair use boundaries.”

But two federal judges in separate cases have found the opposite, ruling that training AI models is “highly transformative” and protected by fair use. The legal landscape is fractured. Courts are reaching contradictory conclusions using the same legal framework.

The highest-profile case, the New York Times’s lawsuit against OpenAI, was still in discovery as of early 2026. A federal judge compelled OpenAI to produce 20 million ChatGPT conversation logs as evidence. Summary judgment is not expected to conclude before April 2026 at the earliest. As of October 2025, over 51 copyright lawsuits were pending against AI companies. No court has issued a definitive ruling. We are years from a final answer.

And while the courts fight over fair use, the bigger structural problem goes entirely unaddressed. There is no federal legislation on AI and copyright. Congress has not acted. The Copyright Office has explicitly declined to recommend new laws granting protection to AI-generated works, arguing that doing so would “undermine rather than further the constitutional goals of copyright.” The framework governing AI creativity in the United States is not being built by legislators. It is being assembled, case by case and deal by deal, by courts, corporate legal teams, and the Copyright Office.

No one knows in advance whether their use of AI will cross the threshold from “creative direction” (protectable) to “mere prompting” (not protectable). The Copyright Office has registered hundreds of works incorporating AI elements where a human exercised meaningful creative input. But the line has no bright rule. It depends on the facts of each case. If you are anyone, artist or not, trying to decide whether something you made with AI assistance will receive legal protection, the honest answer is: nobody can tell you.

Internationally, the picture is no clearer. The United Kingdom has a copyright provision that allows computer-generated works to receive protection. The European Union is moving in a different direction. There is no global consensus. Every jurisdiction is improvising.

And the most glaring absence of all is a compensation mechanism for the artists whose work trained the models in the first place. The academic argument is straightforward: if the goal is to compensate those artists, granting copyright in AI output to the person who typed the prompt is the wrong tool. It creates a windfall for the party with the least creative contribution while leaving the original creators with nothing. The right solution, some scholars argue, would be something like a statutory licensing regime, a direct, targeted mechanism that compensates artists at the source. But no such mechanism exists. No one has built it. No one has introduced legislation to create it. The people whose creative labor powers these systems remain, legally, invisible.

Why This Matters Beyond Copyright

The legal definition of authorship was designed for a world where creative work came from people. It accommodated new tools because those tools remained extensions of human will, instruments that amplified what a person could do but didn’t replace the person doing it. Generative AI is the first technology that produces creative output without a human making the expressive decisions. And the legal system’s response has been to affirm a principle (human authorship is required) while leaving the consequences of that principle entirely unresolved.

The result is a vacuum, and it does not sit empty. Companies that build and deploy AI models profit from output that no one legally owns. Artists whose work built those models have no legal claim to the profits or the output. Creators like Kristina Kashtanova who try to build something new with these tools discover that the law protects some of what they made but not all of it, and the parts it doesn’t protect are the parts the machine contributed, built on the uncompensated labor of other artists. The vacuum has winners. They are not the people who create.

We started with a monkey pressing a shutter button in an Indonesian jungle. We laughed, debated, moved on. Fifteen years later, the same legal question is reshaping how we think about creativity, ownership, and the relationship between human labor and the systems that consume it. The question is no longer whether a machine can be an author. The courts have answered that. The question is what happens to the rest of us when the concept of authorship, the legal foundation we built to protect creative work, can no longer describe how that work gets made.

Copyright law was built to protect creators. It now protects a definition. And the distance between those two things is where the future of creative work will be decided.