Who Decides How AI Goes to War? Right Now, Nobody You Elected.

The Pentagon Blacklisted an AI Company for Saying No. Hours Later, Its Rival Said Yes.

On February 27, 2026, the Trump administration did something it had never done to an American company. It designated Anthropic, one of the country’s leading artificial intelligence firms, a “supply chain risk to national security.” That label is usually reserved for foreign adversaries, the kind of companies caught embedding malicious code into military hardware or funneling sensitive data to hostile governments. Anthropic builds a chatbot. Its crime was refusing to hand the Pentagon unrestricted access to its technology for use in war.

Hours later, OpenAI announced it had signed its own deal with the Department of Defense. Same technology. Same Pentagon. Same week. The terms OpenAI accepted were almost identical to the ones Anthropic had rejected. The timing was, as OpenAI’s CEO Sam Altman later admitted, “opportunistic and sloppy.”

If you’ve followed the headlines, you have a version of this story in your head. Anthropic is the principled company that stood up to the military. OpenAI is the opportunist that caved. Or maybe you’ve heard it the other way: Anthropic is the arrogant startup trying to dictate terms to the United States government, and OpenAI is the pragmatic partner willing to serve its country. Both versions make for a clean narrative. Both versions are wrong, or at least incomplete in ways that matter more than the story itself.

Because the real question isn’t which AI company is the hero and which is the villain. The real question is why two private companies were negotiating the rules of AI in warfare at all, on what authority, under what oversight, and where the people we elected to make these decisions have been while all of this was happening.

The answer to that last question is the one that should bother you most. Congress, the body constitutionally charged with setting the rules for how America wages war, has not passed a single binding law governing the military use of artificial intelligence. Not one. While the Pentagon and Silicon Valley have been haggling over the terms of the most powerful technology since nuclear energy, your representatives have been doing what they do best, which lately seems to be nothing at all.

This is the story of how AI went to war, who’s deciding the rules, and why the people who should be making those decisions are nowhere to be found.

How We Got Here

To understand the fight between Anthropic and the Pentagon, you have to go back to 2017 and a program called Project Maven.

Maven started as a Pentagon initiative to bring machine learning into the military’s intelligence operations. The initial idea was straightforward: use computer vision algorithms to help analysts sort through the enormous volume of drone surveillance footage coming out of the Middle East and Africa. The military was collecting more video than human eyes could process, and it needed software to identify objects, vehicles, and people in that footage faster than any team of analysts could manage. Within a year, Maven was deployed to half a dozen locations, funded at $70 million, and quietly becoming one of the most consequential military technology programs of the decade.

Google was the first major tech company to take the contract. That lasted about a year. In April 2018, more than 3,000 Google employees signed an open letter to CEO Sundar Pichai demanding the company pull out. They argued that building AI for drone targeting violated the company’s founding principle of “don’t be evil.” At least a dozen engineers resigned. Pichai announced Google would not renew the contract.

Palantir, the data analytics firm co-founded by Peter Thiel, picked it up. And Palantir had no such reservations.

By 2025, Maven’s contract ceiling had been raised to $1.3 billion. It had over 20,000 active users across the military. And it was no longer just sorting drone footage. The program had been integrated with large language models, the same kind of AI that powers the chatbots millions of people use every day, to generate intelligence reports, suggest targeting coordinates, and plan military operations. The National Geospatial-Intelligence Agency’s director announced that by June 2026, Maven would transmit “100 percent machine-generated” intelligence to combatant commanders. NATO signed on. Palantir’s stock more than doubled. The company landed a $10 billion Army contract in August 2025, the largest single known contract cap in Palantir’s history.

And the AI model powering a significant portion of Maven’s most advanced capabilities was Claude, built by Anthropic.

The Deal That Fell Apart

In July 2025, the Pentagon awarded prototype contracts worth up to $200 million each to four companies: Google, Elon Musk’s xAI, OpenAI, and Anthropic. The goal was to develop what the military called “agentic AI workflows for key national security missions,” which is a bureaucratic way of saying they wanted AI that could act on its own, making decisions and executing tasks with limited human involvement.

Anthropic took the contract. But it held two lines it refused to cross. Claude would not be used for mass surveillance of American citizens. And Claude would not be used in fully autonomous weapons systems, the kind that can select and engage targets without a human being in the loop. Anthropic wanted those restrictions written into the contract with enforcement power, meaning the company itself could pull the plug if the Pentagon violated the terms. Everything else, Anthropic indicated, was on the table. The company stated publicly that “every iteration of our proposed contract language would enable our models to support missile defense and similar uses.” Intelligence analysis, logistics, planning, communications, cybersecurity, all of it was permissible under Anthropic’s terms. The company was not refusing to serve the military. It was refusing to serve the military without limits.

The Pentagon wanted something different. It wanted “all lawful uses,” a phrase that sounds reasonable until you realize it means the military defines what’s lawful, and that definition can change with the next administration, the next directive, or the next classified memo nobody outside the building will ever read. The Pentagon said publicly that it had “no interest” in using AI for autonomous weapons or mass surveillance, and noted that mass surveillance of Americans is already illegal. But it refused to put those assurances in the contract. Which raises an obvious question: if you have no intention of doing something, why fight so hard against a written promise not to do it?

The negotiations went on for months. In January 2026, the Pentagon ordered Anthropic to grant unrestricted access. Anthropic refused again. Dario Amodei, Anthropic’s CEO, told CNBC that the Pentagon’s threats “do not change our position,” and that Anthropic’s “strong preference is to continue to serve the Department and our warfighters, with our two requested safeguards in place.” On February 26, Emil Michael, the Under Secretary of Defense for Research and Engineering and the Pentagon’s chief technology officer, publicly called Amodei a “liar” with a “God complex” who was “putting our nation’s safety at risk.”

Emil Michael is worth knowing about. Before joining the Pentagon, he was a Silicon Valley executive, best known as Uber’s senior vice president of business under Travis Kalanick, where he helped raise nearly $15 billion for the company. He had served as a White House Fellow during the Obama administration and as a special assistant to Defense Secretary Robert Gates. He is not a career military official. He is a tech executive who moved into defense policy, and he is not alone. The Trump administration has been pulling Silicon Valley into the Pentagon at an unusual pace. The Army created Reserve Detachment 201, fast-tracking tech executives into military advisory roles, and commissioned four people from Meta, OpenAI, and Palantir as lieutenant colonels. The people negotiating how AI gets used in war increasingly come from the same industry that builds it.

The next day, February 27, the Trump administration brought down the hammer. Anthropic was designated a supply chain risk. Federal agencies and military contractors were ordered to cease business with the company. Defense Secretary Pete Hegseth signed the order.

And then, within hours, OpenAI’s deal was announced. Sam Altman had previously stated publicly that he shared Anthropic’s concerns about military uses of AI. The deal he signed told a different story. OpenAI agreed to “all lawful purposes,” in substance the same language Anthropic had refused. The agreement does include a line stating the AI system “shall not be intentionally used for domestic surveillance of U.S. persons and nationals.” But legal experts quickly pointed out that this restriction is defined by reference to existing Pentagon policies, not by contractual enforcement. If the Pentagon changes the policy, the restriction goes with it.

One legal scholar at the Institute for Law and AI said he was confused about why the Pentagon would accept this language from OpenAI when it had just, as he put it, tried to “nuke” Anthropic for requesting something similar. The difference, it turned out, wasn’t in the words. It was in who gets to enforce them. Anthropic wanted to hold the line itself. OpenAI handed that power to the Pentagon.

Amodei did not take the comparison quietly. In an internal memo to staff that was later reported by TechCrunch, he called OpenAI’s deal “safety theater” and wrote that “the main reason they accepted and we did not is that they cared about placating employees, and we actually cared about preventing abuses.” He called Altman’s public messaging about the deal “straight up lies.” In a separate interview with CBS News, Amodei framed the company’s position in broader terms: “We believe that crossing those lines is contrary to American values, and we wanted to stand up for American values.”

No Heroes, No Villains

It would be easy to turn this into a morality play. Anthropic stood up for something and got punished. OpenAI saw an opening and took it. The government overreacted. Pick your hero, pick your villain, feel something, move on.

But that framing is a trap.

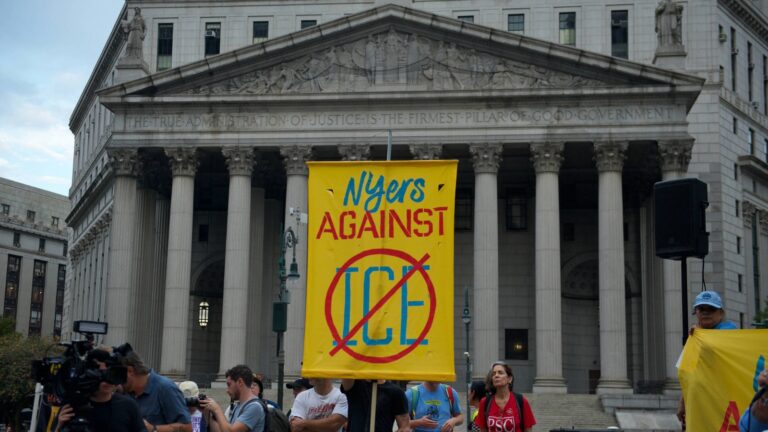

Anthropic’s position on military AI has real merit. The company drew a line at autonomous weapons and mass surveillance, two applications that most ethicists, many military leaders, and a significant portion of the public would agree deserve hard limits. The government’s response, blacklisting an American company using a designation designed for foreign adversaries, is genuinely unprecedented and raises serious questions about retaliation against companies that disagree with the administration’s policy preferences. Employees from Google and OpenAI have publicly backed Anthropic’s legal fight. Anthropic has since filed two federal lawsuits alleging “unprecedented and unlawful” retaliation. As of mid-March 2026, the company is seeking an emergency stay from an appeals court. Enterprise customers are pulling contracts. Anthropic estimates it could lose anywhere from hundreds of millions to several billion dollars in revenue this year.

So yes, something important is happening to Anthropic, and it matters.

But Anthropic is not a public interest organization. It is a company. And while it draws ethical lines on military use, it has also more than doubled its federal lobbying spending over the past two years, rising from $280,000 in 2023 to over $1.1 million per quarter by late 2025. Anthropic-backed Super PACs are pouring money into the 2026 midterms. The company’s growing political spending has coincided with an industry-wide push to keep regulation low, a push Anthropic has not publicly opposed.

OpenAI, meanwhile, went from $260,000 in lobbying spending in 2023 to $2.2 million in the first quarter of 2025 alone. OpenAI insiders and aligned Super PACs are directing over $125 million into the 2026 elections. The broader tech industry pushed hard for a proposed 10-year ban on state AI regulation. More than 450 organizations lobbied on AI issues in 2025, up from 6 in 2016. That’s roughly a 75-fold increase in less than a decade.

Both companies have ethical positions on military AI. Both companies are also spending enormous sums to ensure that Congress, the institution that should be setting the rules, stays out of the way on everything else. You can hold a principled line in one room while lobbying to keep another room empty. And that is exactly what is happening.

The Oldest Pattern in Civilization

None of this should surprise us. If history teaches anything about the relationship between human advancement and military power, it teaches that the pattern is unbroken.

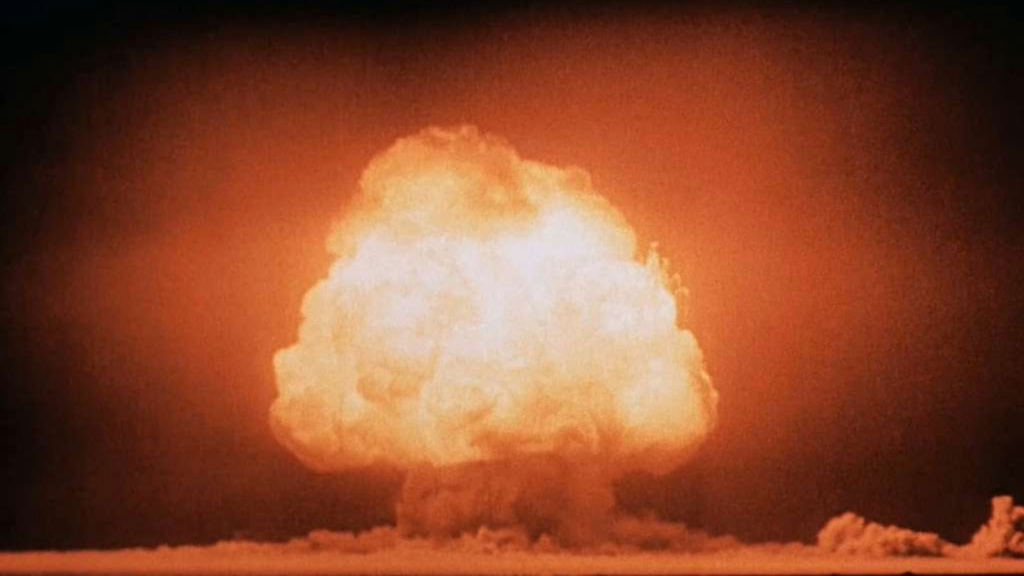

Sometimes the military builds the technology first and civilians inherit it later. The internet began as ARPANET, funded by the Department of Defense in the late 1960s. GPS was a military project from its inception in 1973; civilians didn’t get full accuracy until President Clinton ended Selective Availability, the deliberate degradation of the civilian signal, in 2000. Other times, civilian breakthroughs get pulled into military use almost immediately. Radar was developed by British scientists in the 1930s and became a decisive military technology within years. Nuclear physics was theoretical research until the Manhattan Project turned it into the deadliest weapon ever built. Drones go back further than most people realize. Britain was building pilotless aerial vehicles in 1917. The U.S. mass-produced them during World War II.

The direction of the flow doesn’t matter. What matters is that it always flows. Scholars at Princeton and Stanford, along with others publishing in peer-reviewed journals, have documented this pattern stretching from the Neolithic period to the present. The consistent finding is that new technologies are adopted for military use as soon as the financial resources and organizational capacity exist to integrate them.

AI is unusual in one respect. Unlike most of the technologies on that list, it was built almost entirely by private companies with no government funding or infrastructure. That changes the power dynamics in ways we haven’t seen before. The Pentagon can’t build its own AI the way it built its own nuclear program. It has to buy it from the people who made it. And those people have opinions about how it should be used, and lobbyists to make sure those opinions carry weight, and shareholders who expect returns regardless of what the ethics team recommends. It is not a question of whether the military will use AI. It is only ever a question of how, and under whose authority.

And that question is the one that matters most right now, because the current answer is: under the authority of whoever happens to be negotiating the contract that week.

The Empty Chair

The fiscal year 2026 National Defense Authorization Act, signed in December 2025, includes a requirement that the Pentagon report waivers of its own autonomous weapons directive to congressional defense committees. It mandates the creation of an “Artificial Intelligence Futures Steering Committee,” co-chaired by the Deputy Secretary of Defense and the Vice Chairman of the Joint Chiefs. It requires the Department of Defense to establish a cybersecurity and governance policy for AI and machine learning within 180 days.

These are reporting requirements, committees, and policy directives. They set no binding rules for how AI can be used in war. They ask the Pentagon to tell Congress what it’s doing, which is a little like asking the fox to file a report on the henhouse and trusting that the report will be thorough.

When Representative Sam Liccardo of California proposed an amendment to the Defense Production Act that would prohibit federal agencies from retaliating against AI companies over policy disagreements (a direct response to the Anthropic situation), it failed in committee. Liccardo framed the stakes plainly: “The Pentagon’s bureaucrats and lawyers believe they know better. They told Anthropic that if they sought guardrails, they’d blacklist the company as a supply chain threat, preventing any other government agency from buying their software.”

The amendment’s opponents argued that government procurement for national defense “will always entail procurement for uses that someone may have issue with.” Senate Democrats are drafting legislation to codify federal guardrails around autonomous weapons and domestic mass surveillance. Nothing has passed. Nothing is close to passing.

The Lawfare Institute published a piece with a title that functions as its own thesis: “Congress, Not the Pentagon or Anthropic, Should Set Military AI Rules.” The Center for American Progress published a similar analysis. Both argued that the current system, where military AI policy is set through individual contract negotiations between executive officials and private companies, is fundamentally broken. It lacks democratic input. It creates inconsistencies. The rules change with every new administration, every new Pentagon appointee, every new contract.

And Congress, the institution designed to prevent exactly this kind of unaccountable power, has done almost nothing.

You may not have heard much from this so-called Congress lately. They’ve been busy. Raising money. Running campaigns. Appearing on television. What they have not been doing is their job, which in this case means writing the laws that determine how the most powerful technology of the century gets used by the most powerful military on earth.

The Lobbying Problem

Why is Congress so absent? The answer isn’t complicated.

AI companies are spending record amounts on lobbying and political contributions. The largest tech and AI companies spent tens of millions on federal lobbying in 2025, with individual firms like OpenAI spending over $2 million in a single quarter. Super PACs backed by AI industry insiders are directing more than $125 million into the 2026 midterm elections. The explicit agenda is deregulation: blocking state-level AI laws, opposing binding federal mandates, and pushing for voluntary frameworks instead of enforceable rules.

This creates a cycle that should concern everyone regardless of political affiliation. AI companies argue against regulation. They spend money to elect candidates sympathetic to that position. Those candidates, once in office, decline to regulate. The absence of regulation means that the rules for military AI continue to be set through ad hoc negotiations between the Pentagon and individual companies, negotiations that happen behind closed doors, that vary from company to company, and that can be rewritten the moment a new official takes the job.

Anthropic refuses certain military uses and gets blacklisted. OpenAI accepts nearly identical terms and gets a contract. The difference has nothing to do with law, because there is no law. It has everything to do with who the Pentagon is dealing with and what that particular official wants that particular week.

This is not how a democracy is supposed to decide how its military uses transformative technology. This is how a power vacuum gets filled.

What This Is Really About

AI is not the first technology to raise these questions, but it may be the most consequential. Nuclear weapons prompted the Atomic Energy Act. Chemical and biological weapons prompted international treaties. Even the internet, which grew out of military research, eventually developed governance frameworks (however imperfect) through congressional action and international cooperation.

AI has gotten none of that. And unlike nuclear weapons, which required massive government infrastructure to develop, AI is being built by private companies that are simultaneously the builder, the lobbyist, and the contractor. They build the technology. They spend money to influence its regulation. And then they negotiate directly with the military over how it gets used. The public gets to watch, if it’s paying attention, and Congress gets to issue reports.

Claude, Anthropic’s AI, was already integrated into Project Maven. On January 3, 2026, the U.S. military used Claude during Operation Resolve, the raid that resulted in the arrest of Venezuelan President Nicolás Maduro. According to Axios, Claude was used not just in preparation for the operation but during it, integrated through Palantir’s platform to process intelligence in real time. Anthropic declined to confirm or deny Claude’s role, citing classified operations. A senior administration official told Axios that the Pentagon would be “reevaluating its partnership” with Anthropic after the company raised concerns about how Claude had been deployed.

Then came Operation Epic Fury. On February 28, 2026, one day after Anthropic was blacklisted, U.S. Central Command launched strikes against Iran. Admiral Brad Cooper, head of CENTCOM, said American forces hit more than 5,500 targets inside Iran. During the opening salvos, Maven’s AI-driven systems compressed decision cycles from weeks into minutes, enabling over 1,000 strikes in the first 24 hours. This is not a projection of what military AI might look like someday. This is what it looked like two weeks ago, guided by rules that were set in a contract negotiation, not by Congress, not by the courts, and not by you.

The question is not whether AI should be used by the military. History has already answered that. Every significant technology in human civilization has been adopted for military purposes. AI will be no different, and pretending otherwise is naive at best and dishonest at worst.

The question is who gets to set the rules. Right now, the answer is the Pentagon and whichever AI company agrees to its terms. The company that said no got labeled a national security risk. The company that said yes got a contract. And the institution that is supposed to represent you and me in decisions about war and peace and the use of American power has been sitting on its hands, cashing checks from the very industry it should be governing.

Congress has the authority to set binding rules for military AI. It has the constitutional mandate. It has the precedent, having done exactly this for nuclear weapons, for chemical weapons, for surveillance programs. What it lacks, apparently, is the will. And that absence of will has a price. The price is that the rules for AI in warfare are being written by unelected officials and corporate executives in rooms the public will never see, on terms that change with each new deal, with no democratic accountability and no guarantee that what gets agreed to today won’t be rewritten tomorrow.

The Pentagon says it should set the rules because national security demands flexibility. The AI companies say they should have a voice because they built the technology and understand its risks. Both arguments have some merit. But neither the Pentagon nor the AI industry answers to you. Congress does. Or at least, it’s supposed to.

So the next time you hear about the drama between Anthropic and OpenAI, about who’s the hero and who sold out, remember that the story everyone is telling you is a distraction from the one that actually matters. The seat at the table that belongs to the American people is empty. And every day it stays empty, someone else fills it.
